Beyond the lab: human motion analysis with sports machines using smartphones


Rosa Pàmies-Vilà (1), Lluïsa Jordi Nebot (1), Joan Puig-Ortiz (1)

Abstract

For the analysis of human motion, researchers typically turn to biomechanics laboratories equipped with infrared cameras that capture the positions of reflective markers placed on anatomical landmarks of the subject. When motion analysis is conducted outside the laboratory (outdoors or in homes), inertial sensors are commonly employed. The release of the OpenCap application for iOS devices in October 2022 could represent a significant revolution in motion capture. This application, developed by the creators of OpenSim, makes it possible to obtain the joint kinematics of a subject’s movement without markers or infrared cameras. It requires a minimum of two iOS devices (iPhone, iPad, or iPod) mounted on tripods, a calibration board, and a third device to run the OpenCap web application. Kinematic features are derived using the OpenPose and HRNet pose-estimation algorithms, followed by inverse kinematics in OpenSim. The OpenCap web application enables users to collect synchronized videos and visualize motion data that are processed automatically in the cloud, thus eliminating the need for specialized hardware. This work goes a step further and focuses on the use of OpenCap and OpenSim to analyse movements performed on exercise machines. Unlike most previous studies, which model only the subject without incorporating other elements, our approach includes the modelling of gym machines and the recording of data in an uncontrolled environment, allowing us to explore the capabilities of these tools in the recreational and sports domain. We present the results obtained by using OpenCap and OpenSim to analyse motion performed on two exercise machines located outside a biomechanics laboratory. Although challenges were identified in modelling the person-machine interaction, this approach shows potential for enhancing measurement procedures and opening new research possibilities in the sports field.

Keywords: Motion capture, smartphones, OpenCap, sports machines.


Received: 01/06/2023. Accepted: 10/10/2023.

(1) Department of Mechanical Engineering, Universitat Politècnica de Catalunya.

Corresponding authors: ro*********@*pc.edu; ll**********@*pc.edu; jo*******@*pc.edu

1. Introduction

Biomechanical analysis of human motion provides quantitative information about the muscular and skeletal systems during the execution of specific movements. Motion capture systems are key tools for obtaining objective, quantitative, reproducible, and accurate measurements of the movements under analysis. In laboratory settings, infrared cameras are often used to capture the positions of reflective markers placed on anatomical landmarks of the subject. Outside the laboratory, however, such as outdoors or in homes, inertial measurement units (IMUs), which combine accelerometers, gyroscopes, and magnetometers, are commonly used. Although IMUs offer a convenient alternative, their accuracy is limited: it can be degraded by external factors such as electromagnetic interference and vibrations, as well as by calibration errors and by drift that accumulates over time.
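Drift arises because orientation is obtained by integrating angular rate, so even a small constant gyroscope bias accumulates into an error that grows with time. The sketch below illustrates this for a single axis with a segment held still; the sample rate and bias are hypothetical values, and real IMU pipelines fuse several sensors rather than integrating the gyroscope alone.

```python
# Minimal sketch: dead-reckoning an angle from a gyroscope with a constant bias.
# The sample rate and bias are hypothetical values chosen for illustration.

def integrate_gyro(rates_deg_s, dt_s):
    """Integrate angular-rate samples (deg/s) into angles (deg) with forward Euler."""
    angle = 0.0
    angles = []
    for rate in rates_deg_s:
        angle += rate * dt_s
        angles.append(angle)
    return angles


SAMPLE_RATE_HZ = 100.0   # hypothetical IMU sample rate
GYRO_BIAS_DEG_S = 0.5    # hypothetical uncorrected gyroscope bias
DURATION_S = 60.0

n_samples = int(DURATION_S * SAMPLE_RATE_HZ)
# The segment is held still, so the true rate is zero and the sensor reads only its bias.
measured = [0.0 + GYRO_BIAS_DEG_S] * n_samples
estimated = integrate_gyro(measured, 1.0 / SAMPLE_RATE_HZ)

print(f"Apparent rotation after {DURATION_S:.0f} s: {estimated[-1]:.1f} deg")
```

The error grows linearly with time, which is why practical IMU systems combine gyroscope data with accelerometer and magnetometer readings; relying on the magnetometer, in turn, is what exposes the estimate to electromagnetic interference.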

The OpenCap application for iOS devices (Uhlrich et al., 2022), released in October 2022, has recently raised the possibility of a revolution in motion capture. Developed by the team responsible for OpenSim (Delp et al., 2007), OpenCap obtains the joint kinematics of a subject’s movement without markers or infrared cameras. Its implementation requires a minimum of two iOS devices (iPhone, iPad, or iPod) mounted on tripods, a calibration board, and a third device to run the OpenCap web application. Kinematic characteristics are derived using the OpenPose and HRNet pose-estimation algorithms, followed by inverse kinematics in OpenSim.

The main goal of this study is to demonstrate the feasibility of conducting biomechanical experiments outside a laboratory when the subject interacts with other elements. More specifically, we apply this methodology to scenarios involving exercise machines. This introduces additional complexity to motion capture, since the machines may occlude parts of the subject’s body from the cameras during certain phases of the movement.

2. Methods

This work presents two distinct case studies. In the first, a subject uses a gym machine to exercise the gastrocnemius muscle; in the second, a subject uses an outdoor bio-healthy sports machine. In both cases, the components of the sports machine must first be modelled and the musculoskeletal model edited to incorporate them. The final part of this section explains how motion capture outside the laboratory is prepared.

2.1. Machine modelling

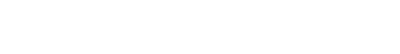

Both machines were modelled in SolidWorks from measurements taken on the existing machines. In both cases, the machine consists of a fixed base attached to the ground and a movable component.

Figure 1 shows the model of the gym machine: the first assembly is fixed to the ground (figure 1a), and the part containing the seat is movable (figure 1b). Similarly, figure 2 shows the outdoor bio-healthy sports machine, consisting of a fixed part (figure 2a) and a movable part (figure 2b). Both figures also include an image of the commercial model.

2.2. Editing the musculoskeletal model

OpenCap uses the musculoskeletal model developed by Rajagopal et al. (2016), consisting of 21 segments and 33 degrees of freedom (pelvis in ground reference [6], hips [2×3], knees [2×1], ankles [2×2], metatarsophalangeal joints [2×1], lumbar region [3], shoulders [2×3], and elbows [2×2]).
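The per-group counts in the list above can be tallied to check them against the stated total and to separate the six absolute pelvis coordinates from the relative joint angles. A trivial sketch:

```python
# Degrees of freedom per joint group, as listed in the text for the model used by OpenCap.
dof_per_group = {
    "pelvis (relative to ground)": 6,
    "hips": 2 * 3,
    "knees": 2 * 1,
    "ankles": 2 * 2,
    "metatarsophalangeal joints": 2 * 1,
    "lumbar region": 3,
    "shoulders": 2 * 3,
    "elbows": 2 * 2,
}

total_dof = sum(dof_per_group.values())
# The six pelvis coordinates are absolute (position and orientation in the ground frame);
# every other coordinate is a joint angle between two adjacent segments.
relative_joint_angles = total_dof - dof_per_group["pelvis (relative to ground)"]

print(total_dof, relative_joint_angles)  # 33 27
```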

Within the scope of this study, our objective is to extend the modelling approach to the machine components. The human model must therefore be modified to include the two new rigid bodies of each machine, whose geometries are imported from STL files exported from SolidWorks.

In addition, two extra joints were introduced into the model. In both machine models, a PinJoint (a revolute joint) connects the two machine components. Furthermore, a WeldJoint links the machine to the right femur in the gym machine, and connects the pelvis to the mobile part in the bio-healthy sports machine.
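OpenSim's documentation describes a PinJoint as a single rotation about the common z axis of a joint frame fixed on the parent body and one fixed on the child body, and a WeldJoint as a joint with no coordinates. Assuming that convention, the relative pose of the two bodies can be sketched with plain 4×4 homogeneous matrices. The helper names and the geometry in the example are hypothetical, and the code does not use the OpenSim API.

```python
import math

# 4x4 homogeneous transforms stored as nested lists: [[R, p], [0, 1]].


def matmul(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(4)) for j in range(4)] for i in range(4)]


def translation(x, y, z):
    return [[1.0, 0.0, 0.0, x], [0.0, 1.0, 0.0, y], [0.0, 0.0, 1.0, z], [0.0, 0.0, 0.0, 1.0]]


def rot_z(q):
    c, s = math.cos(q), math.sin(q)
    return [[c, -s, 0.0, 0.0], [s, c, 0.0, 0.0], [0.0, 0.0, 1.0, 0.0], [0.0, 0.0, 0.0, 1.0]]


def rigid_inverse(t):
    """Inverse of [R p; 0 1], which is [R^T  -R^T p; 0 1]."""
    r_t = [[t[j][i] for j in range(3)] for i in range(3)]
    p = [t[i][3] for i in range(3)]
    p_inv = [-sum(r_t[i][k] * p[k] for k in range(3)) for i in range(3)]
    return [r_t[i] + [p_inv[i]] for i in range(3)] + [[0.0, 0.0, 0.0, 1.0]]


def pin_joint(x_pf, x_bm, q):
    """Child body pose in the parent body: X_PF * Rz(q) * inverse(X_BM).

    x_pf: joint frame fixed on the parent; x_bm: joint frame fixed on the child;
    q: the single joint coordinate (rotation about the shared z axis, in rad).
    """
    return matmul(matmul(x_pf, rot_z(q)), rigid_inverse(x_bm))


def weld_joint(x_pf, x_bm):
    """A weld has no coordinate: the relative pose is a constant transform."""
    return matmul(x_pf, rigid_inverse(x_bm))


def apply(t, point):
    """Map a 3D point expressed in the child frame into the parent frame."""
    return tuple(sum(t[i][k] * point[k] for k in range(3)) + t[i][3] for i in range(3))


# Hypothetical geometry: the pin axis sits 0.4 m above the origin of the fixed base,
# and a point of interest lies 0.5 m from the axis along the moving part's x axis.
pivot_on_base = translation(0.0, 0.4, 0.0)
pivot_on_moving_part = translation(0.0, 0.0, 0.0)
for q in (0.0, math.pi / 2):
    print(q, apply(pin_joint(pivot_on_base, pivot_on_moving_part, q), (0.5, 0.0, 0.0)))
```

The pin contributes one coordinate (the machine opening angle), whereas the weld adds none; it only fixes a constant transform whose offsets must be chosen by the modeller.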

2.3. Configuration and preparation for motion capture

To carry out the motion capture, the following equipment is required: a laptop computer, a reliable internet connection, at least two iOS devices (iPhone or iPad) manufactured from 2018 onwards with the TestFlight application installed, a tripod with a mobile device mount for each unit, and an A4 printout of the chessboard supplied by OpenCap.

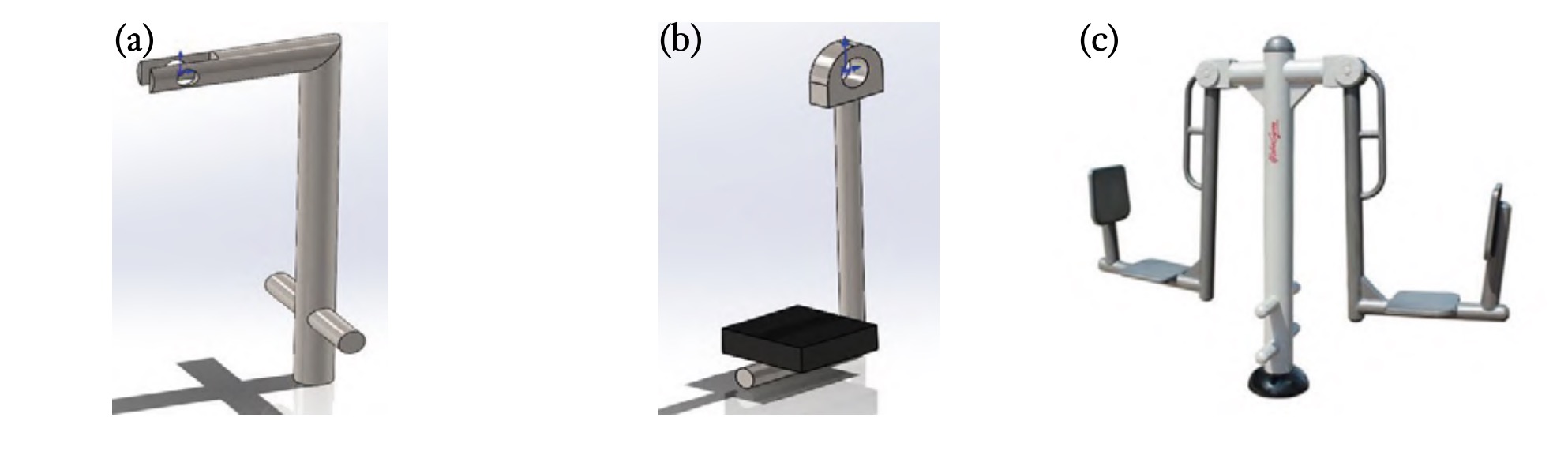

After selecting the equipment and a suitable recording environment, the cameras are mounted on their tripods so that both devices capture the entire motion. To ensure a complete view of the motion and the subject, the cameras should be placed at a height of 1 to 1.5 metres.

In both analysed scenarios, this configuration ensures that the cameras cover the entire range of motion without the subject leaving the field of view of the devices. The selected configuration should also allow the subject to be captured in a standing position, which is necessary for calibration. The distance between the cameras and the subject and machine under investigation must be such that both are entirely within the cameras’ field of view; in this study, this distance was between 2 and 4 metres. Regarding the camera angles, placing the cameras in a purely frontal or lateral position relative to the subject and the machine is not recommended, as limbs would be occluded and motion capture adversely affected. Instead, recording angles between 30 and 45 degrees relative to the front of the object under study are recommended.
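The placement rules above can be turned into a quick planning check. The sketch below places two cameras symmetrically at ±angle from the front of the subject and checks the ranges used in this study; it also estimates, with an ideal pinhole model, the vertical extent covered at a given distance. The 60° vertical field of view is a hypothetical value, not a specification of any iOS device, and the visible-height estimate assumes the camera is aimed at the vertical centre of the subject and machine.

```python
import math

# Ranges reported in the text for this study.
DISTANCE_RANGE_M = (2.0, 4.0)
ANGLE_RANGE_DEG = (30.0, 45.0)
HEIGHT_RANGE_M = (1.0, 1.5)


def camera_positions(distance_m, angle_deg, height_m):
    """Two cameras placed symmetrically at +/- angle from the subject's front.

    The subject/machine is at the origin facing +y; x is lateral and z is vertical.
    """
    a = math.radians(angle_deg)
    return [(side * distance_m * math.sin(a), distance_m * math.cos(a), height_m)
            for side in (-1, 1)]


def within_recommendations(distance_m, angle_deg, height_m):
    return (DISTANCE_RANGE_M[0] <= distance_m <= DISTANCE_RANGE_M[1]
            and ANGLE_RANGE_DEG[0] <= angle_deg <= ANGLE_RANGE_DEG[1]
            and HEIGHT_RANGE_M[0] <= height_m <= HEIGHT_RANGE_M[1])


def visible_height_m(distance_m, vertical_fov_deg):
    """Pinhole estimate of the vertical extent covered at a given distance."""
    return 2.0 * distance_m * math.tan(math.radians(vertical_fov_deg) / 2.0)


# Hypothetical setup: 3 m away, 35 deg off-axis, 1.2 m high, 60 deg vertical field of view.
print(camera_positions(3.0, 35.0, 1.2))
print(within_recommendations(3.0, 35.0, 1.2))   # True
print(round(visible_height_m(3.0, 60.0), 2))    # 3.46
```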

To calibrate the cameras, the chessboard is placed in the recording scene. It must be visible to all cameras, perpendicular to the ground, and located within the desired capture volume. Figure 3 shows the capture setup for the gym machine. Calibration is carried out by following the step-by-step guide provided on the OpenCap website.
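Chessboard calibration relies on the fact that the 3D positions of the board’s corners are known in the board’s own frame, so a camera’s pose can be estimated by finding the rigid transform whose pinhole projections best match the corners detected in the image. The sketch below illustrates that principle only; it is not OpenCap’s calibration code. The board layout, square size, intrinsics and the single-angle brute-force pose search are hypothetical simplifications (real calibration estimates a full 6-DOF pose with a nonlinear solver and accounts for lens distortion).

```python
import math


def board_corners(cols, rows, square_m):
    """Inner-corner coordinates of a planar chessboard in its own frame (z = 0)."""
    return [(c * square_m, r * square_m, 0.0) for r in range(rows) for c in range(cols)]


def to_camera(point, yaw_rad, t):
    """Rigid transform board -> camera: rotation about the camera y axis, then translation."""
    x, y, z = point
    c, s = math.cos(yaw_rad), math.sin(yaw_rad)
    return (c * x + s * z + t[0], y + t[1], -s * x + c * z + t[2])


def project(point_cam, fx, fy, cx, cy):
    """Ideal pinhole projection (no lens distortion) of a camera-frame point, z forward."""
    x, y, z = point_cam
    if z <= 0:
        raise ValueError("point is behind the camera")
    return (fx * x / z + cx, fy * y / z + cy)


def reprojection_rmse(observed_px, predicted_px):
    """Root-mean-square pixel distance between detected and predicted corners."""
    sq = [(u - up) ** 2 + (v - vp) ** 2 for (u, v), (up, vp) in zip(observed_px, predicted_px)]
    return math.sqrt(sum(sq) / len(sq))


INTRINSICS = (1500.0, 1500.0, 960.0, 540.0)  # hypothetical fx, fy, cx, cy in pixels
corners = board_corners(5, 4, 0.035)          # hypothetical board layout and square size
board_offset = (-0.07, -0.05, 2.5)            # hypothetical board position in front of the camera


def predict(yaw):
    return [project(to_camera(p, yaw, board_offset), *INTRINSICS) for p in corners]


detected = predict(0.10)  # stand-in for corners found in a video frame
# Recover the yaw by brute-force search over candidates from 0.00 to 0.30 rad.
best_yaw = min((k / 100 for k in range(31)),
               key=lambda yaw: reprojection_rmse(detected, predict(yaw)))
print(best_yaw)  # 0.1
```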

Before recording, the subject’s model must be calibrated. For this purpose, basic anthropometric data (gender, height, and mass) are entered into OpenCap. The participant stands with the arms held 20 to 25 degrees away from the trunk. Starting the calibration in OpenCap generates a virtual model of the subject, which forms the basis for subsequent analyses.

From this point, any movement can be recorded using the OpenCap web application. OpenCap triangulates a set of 3D keypoints from the recorded videos, uses a Long Short-Term Memory (LSTM) network to estimate anatomical marker positions from them, and computes joint kinematics with the Inverse Kinematics tool of OpenSim and the scaled musculoskeletal model (Uhlrich et al., 2022). Users can visualise the resulting three-dimensional kinematics in the web application.
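OpenSim’s Inverse Kinematics tool finds, frame by frame, the model coordinates that minimise a weighted sum of squared distances between model markers and measured markers (in OpenCap’s case, positions estimated from video). The toy sketch below shows that least-squares idea for a planar two-segment leg with hip and knee flexion only, solved with Gauss-Newton and unit weights; the segment lengths, sign conventions and solver are illustrative assumptions, not OpenSim’s implementation.

```python
import math

L_THIGH_M, L_SHANK_M = 0.42, 0.40  # hypothetical segment lengths


def forward(q):
    """Model keypoints [knee_x, knee_y, ankle_x, ankle_y] for q = (hip flexion, knee flexion).

    Sagittal plane, hip joint at the origin, x forward, y up; knee flexion rotates
    the shank backwards relative to the thigh.
    """
    q_hip, q_knee = q
    knee_x, knee_y = L_THIGH_M * math.sin(q_hip), -L_THIGH_M * math.cos(q_hip)
    shank = q_hip - q_knee
    return [knee_x, knee_y,
            knee_x + L_SHANK_M * math.sin(shank), knee_y - L_SHANK_M * math.cos(shank)]


def inverse_kinematics(observed, q0=(0.1, 0.3), iterations=50, step=1e-6):
    """Gauss-Newton least squares: find q minimising ||forward(q) - observed||^2."""
    q = list(q0)
    for _ in range(iterations):
        r = [m - o for m, o in zip(forward(q), observed)]
        # Finite-difference Jacobian, one column per coordinate.
        cols = []
        for j in range(2):
            q_step = list(q)
            q_step[j] += step
            cols.append([(m - o - e) / step for m, o, e in zip(forward(q_step), observed, r)])
        # Solve the 2x2 normal equations (J^T J) dq = -J^T r in closed form (Cramer's rule).
        a11 = sum(x * x for x in cols[0])
        a12 = sum(x * y for x, y in zip(cols[0], cols[1]))
        a22 = sum(y * y for y in cols[1])
        b1 = -sum(x * e for x, e in zip(cols[0], r))
        b2 = -sum(y * e for y, e in zip(cols[1], r))
        det = a11 * a22 - a12 * a12
        dq = ((a22 * b1 - a12 * b2) / det, (a11 * b2 - a12 * b1) / det)
        q = [q[0] + dq[0], q[1] + dq[1]]
        if max(abs(d) for d in dq) < 1e-12:
            break
    return q


true_q = (0.30, 0.60)        # hypothetical hip and knee flexion, rad
observed = forward(true_q)   # stand-in for keypoints estimated from video
print([round(v, 4) for v in inverse_kinematics(observed)])
```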

3. Results

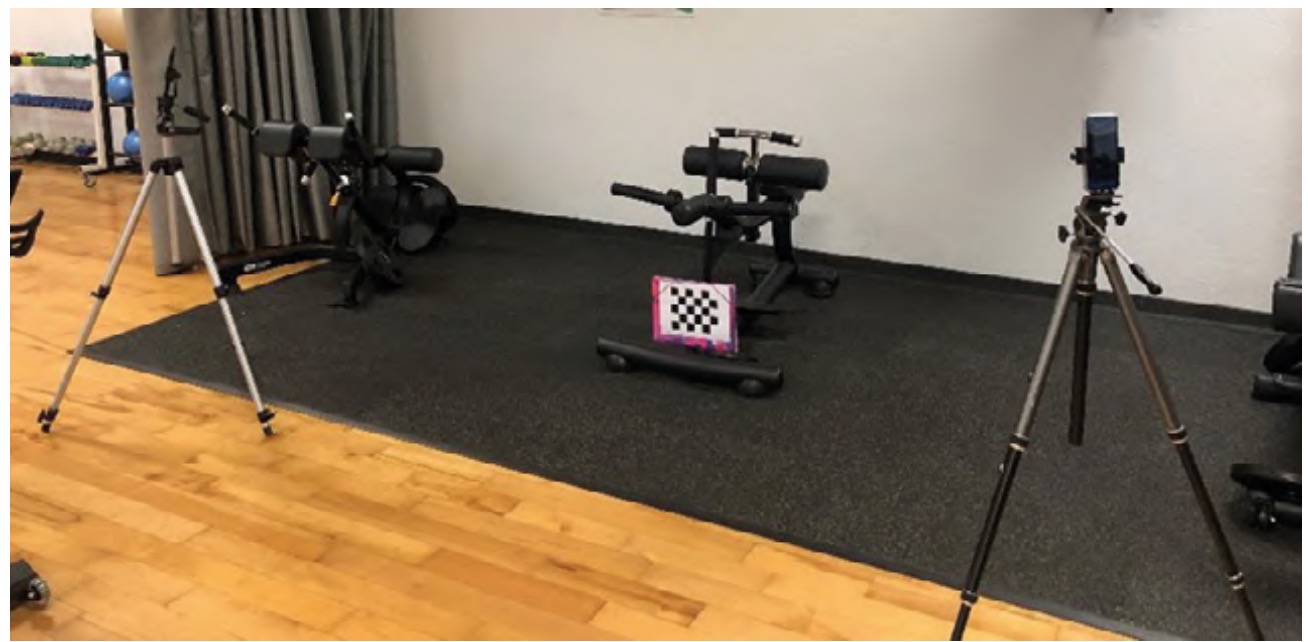

Figure 4 illustrates, for the two cases under examination, a real image and its representation in OpenSim.

OpenCap captures the time evolution of the 27 relative joint angles of the human model and of the 6 degrees of freedom that define the absolute position and orientation of the pelvis with respect to the ground. Including the machine in the overall model introduces a new constraint between the subject and the ground (via the machine). With the new subject-machine models and the motion captures from OpenCap, the kinematics of the entire system can be obtained in OpenSim using the AnalyzeTool.

One of the key challenges is determining precisely the position of the subject relative to the machine, i.e., the subject’s posture on the machine. The OpenCap model does not provide this information, so the only option is to measure, during motion capture, the distance between an anthropometric landmark on the subject and a distinctive point on the machine. Furthermore, it is unclear how the subject’s clothing (and its contrast with the environment) may influence the results.

This section addresses these two challenges. For the gym machine, changes in the leg joint angles are analysed as a function of the clothing worn by the user. For the outdoor machine model, the kinematics are examined in relation to the definition of the subject-machine connection.

3.1. Gym machine

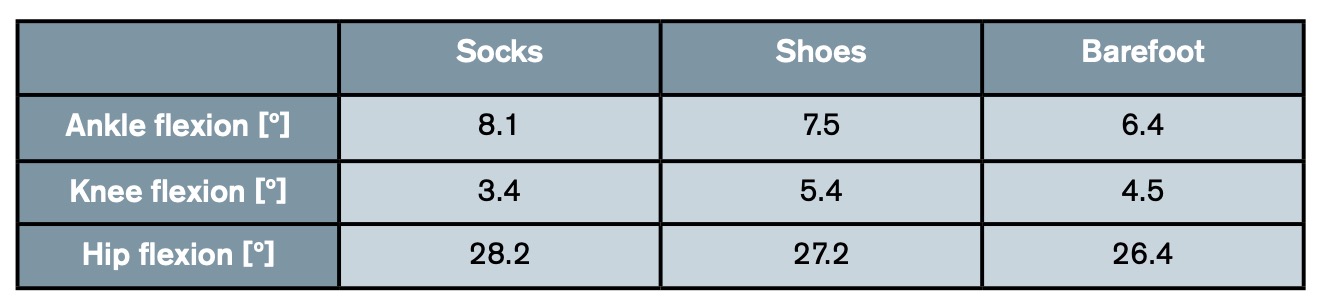

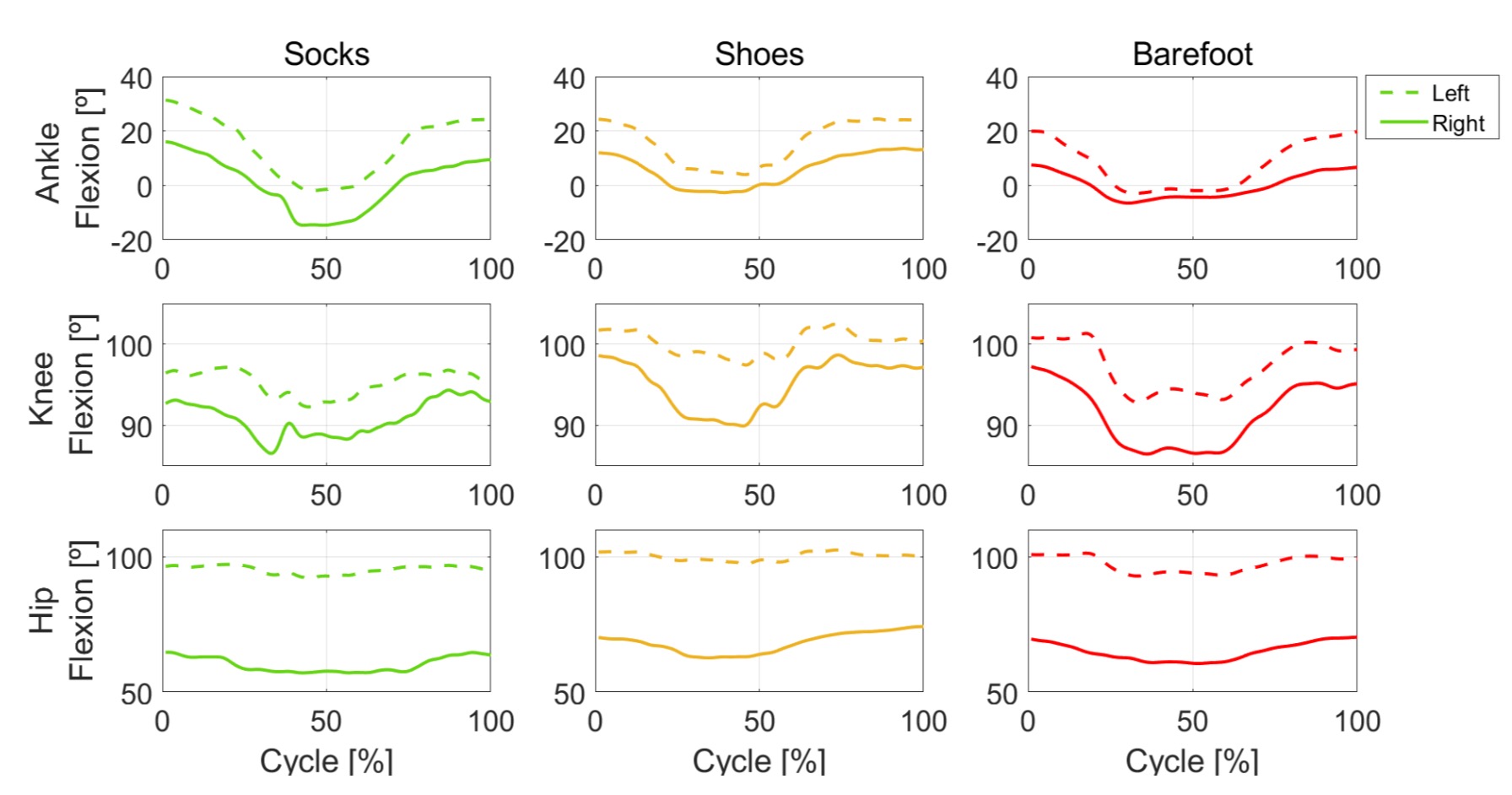

During motion capture, it proved difficult to capture the position of the feet precisely. We therefore analysed the joint kinematics in three distinct scenarios: with the subject wearing socks, wearing shoes, and barefoot. Figure 5 shows the evolution of the ankle, knee, and hip flexion angles of both legs in these three situations. The mean absolute difference between the left and right joint angles was calculated, and the values are presented in table 1.
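The symmetry measure used here and in section 3.2 is the mean, over all time samples, of the absolute difference between the right- and left-leg angle curves. A minimal sketch, assuming both curves come from the same trial and therefore share the same time samples; the angle values in the example are made up for illustration.

```python
def mean_absolute_difference(curve_a, curve_b):
    """Mean of |a_i - b_i| over paired samples of two equally sampled angle curves (deg)."""
    if not curve_a or len(curve_a) != len(curve_b):
        raise ValueError("curves must be non-empty and of equal length")
    return sum(abs(a - b) for a, b in zip(curve_a, curve_b)) / len(curve_a)


# Hypothetical right/left knee flexion samples (deg) from the same trial.
right_knee = [10.0, 20.0, 30.0]
left_knee = [12.0, 18.0, 33.0]
print(mean_absolute_difference(right_knee, left_knee))
```

Curves recorded in separate trials would first need to be resampled to a common time base (for example, normalised to the movement cycle) before this measure is meaningful.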

The results show that the most symmetrical movement occurred when the subject was barefoot. Notably, large left-right differences were observed in hip flexion in all three situations. This difference, of over 25 degrees, can be attributed to the inclusion of the machine in the model: the machine’s moving part is fixed to the right leg, so a new constraint (leg-machine-ground connection) affects this angle. These findings raise questions about the accuracy of the subject-machine connection. Two potential sources of error are identified. First, the machine was rigidly attached (via a WeldJoint) to the subject’s leg, whereas in reality the contact involves significant soft tissue. Second, the precise relative position of the subject and the machine is unknown, and the results are highly dependent on this positioning, as explored in the following section.

3.2. Bio-healthy outdoor sports machine

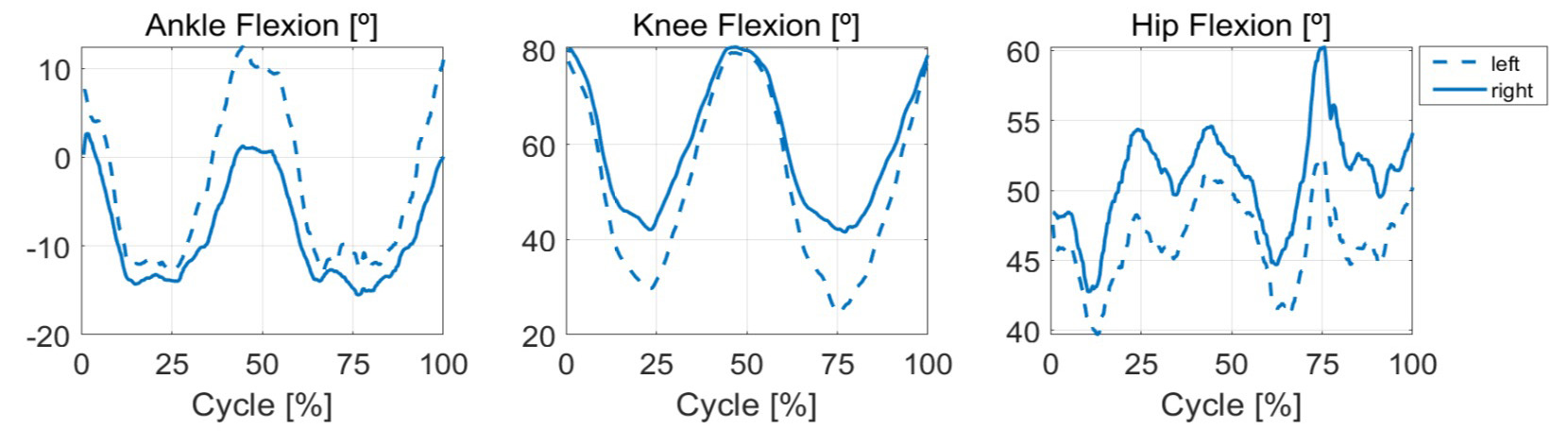

The methodology outlined above allows the subject’s joint angles to be determined during exercise on the outdoor sports machine. Figure 6 shows the ankle, knee, and hip flexion angles of both legs. The mean absolute differences between the left and right curves are 6.2 degrees for the ankle, 9.1 degrees for the knee, and 4.9 degrees for the hip.

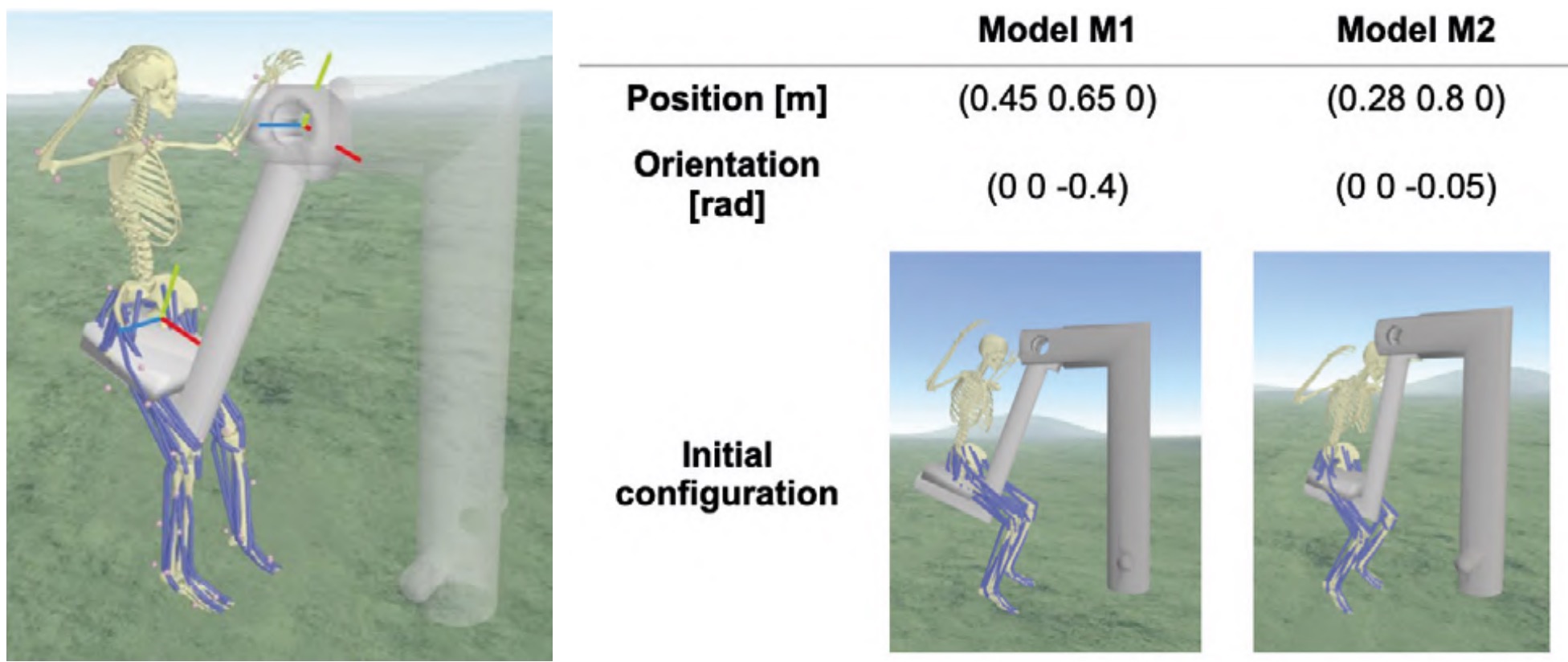

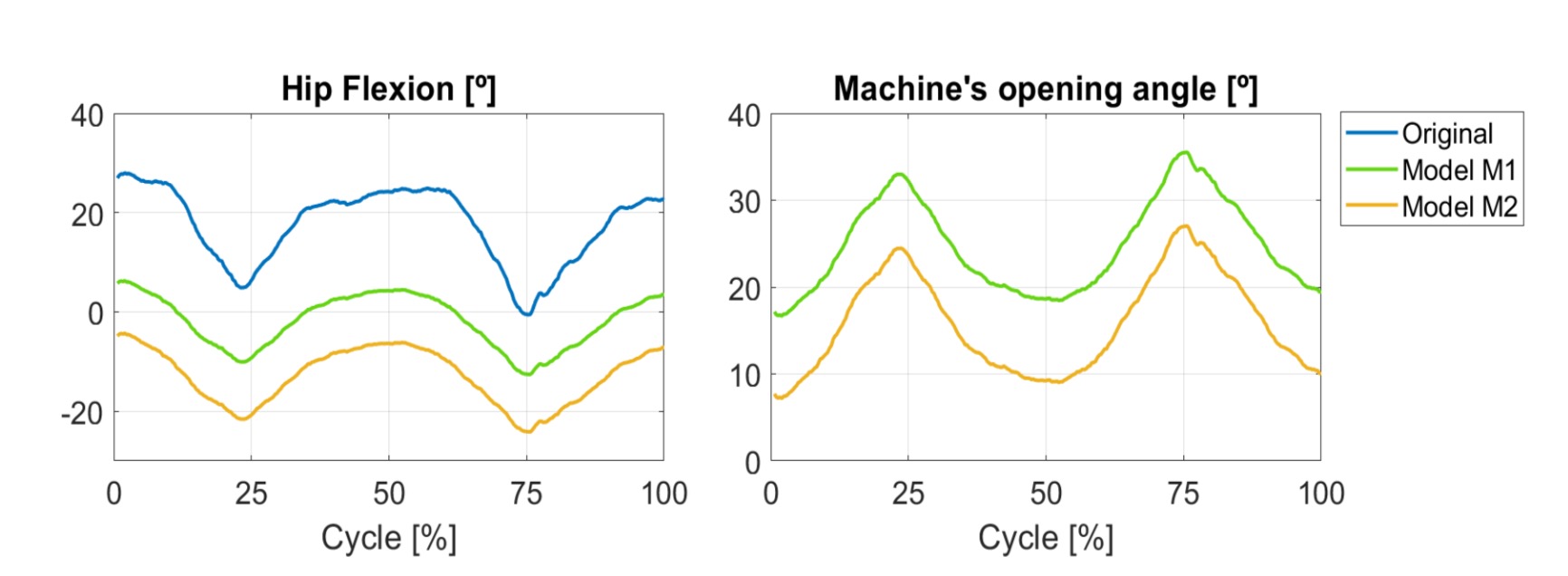

In this case, incorporating the machine into the model does not affect the relative joint angles. The moving part of the machine is fixed to the pelvis, which is the base segment of the model (its coordinates give the absolute position and orientation with respect to the ground). Consequently, the new pelvis-machine-ground constraint does not alter the relative orientation of the limbs, but it does affect the absolute orientation of the pelvis with respect to the ground. This study investigates how the kinematic results vary with the parametrization of this connection. A WeldJoint links the pelvis to the mobile part of the machine. To define this connection, the position and orientation of the machine frame (whose origin lies where the two machine components are joined) must be specified relative to the pelvis frame (see figure 7). To illustrate the significance of this connection, two models (M1 and M2) were created with the parameters shown in figure 7. These parameters give the coordinates of the machine origin expressed in the pelvis frame (in metres) and the orientation of the machine frame (in radians), listed in the order of the RGB axes in the image (red, green, blue).
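The weld offset amounts to a rigid transform from the pelvis frame to the machine frame. The sketch below computes the machine frame in ground from a pelvis pose and an offset, assuming the orientation triple is a body-fixed X-Y-Z rotation sequence (the convention OpenSim documents for offset frames) and that red, green and blue correspond to x, y and z. The pelvis pose and the two offsets, labelled A and B, are hypothetical and are not the M1/M2 values of figure 7.

```python
import math


def rot_x(a):
    c, s = math.cos(a), math.sin(a)
    return [[1.0, 0.0, 0.0], [0.0, c, -s], [0.0, s, c]]


def rot_y(b):
    c, s = math.cos(b), math.sin(b)
    return [[c, 0.0, s], [0.0, 1.0, 0.0], [-s, 0.0, c]]


def rot_z(g):
    c, s = math.cos(g), math.sin(g)
    return [[c, -s, 0.0], [s, c, 0.0], [0.0, 0.0, 1.0]]


def matmul3(a, b):
    return [[sum(a[i][k] * b[k][j] for k in range(3)) for j in range(3)] for i in range(3)]


def body_fixed_xyz(a, b, g):
    """Rotation for a body-fixed (intrinsic) X-Y-Z sequence: R = Rx(a) Ry(b) Rz(g)."""
    return matmul3(matmul3(rot_x(a), rot_y(b)), rot_z(g))


def machine_frame_in_ground(pelvis_r, pelvis_p, offset_xyz_m, offset_rot_rad):
    """Ground pose of the machine frame welded to the pelvis.

    offset_xyz_m: machine origin expressed in the pelvis frame (x = red, y = green, z = blue).
    offset_rot_rad: machine frame orientation relative to the pelvis frame (body-fixed XYZ).
    """
    origin = [pelvis_p[i] + sum(pelvis_r[i][k] * offset_xyz_m[k] for k in range(3))
              for i in range(3)]
    return origin, matmul3(pelvis_r, body_fixed_xyz(*offset_rot_rad))


# Hypothetical pelvis pose (rotated 0.2 rad about z, 0.9 m above ground) and two offsets.
pelvis_r, pelvis_p = rot_z(0.2), [0.0, 0.9, 0.0]
for name, xyz, rot in [("A", (-0.10, -0.05, 0.0), (0.0, 0.0, 0.0)),
                       ("B", (-0.15, -0.05, 0.0), (0.0, 0.0, 0.1))]:
    origin, _ = machine_frame_in_ground(pelvis_r, pelvis_p, xyz, rot)
    print(name, [round(v, 3) for v in origin])
```

Because the real machine pivot does not move, any change of a few centimetres or a tenth of a radian in these offsets has to be absorbed elsewhere in the closed chain, by the pelvis pose and the machine opening angle, which is one way to see why the results depend on this parametrization.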

A kinematic analysis with the machine incorporated yields the machine opening angle and its effect on the pelvic flexion angle. Figure 8a shows the pelvic flexion angle for the base model (without the machine) and for the two proposed connections (models M1 and M2), and figure 8b shows the machine opening angle. On the real machine, this angle is 0 in the equilibrium (closed) position and increases as the subject initiates the movement. Figure 8a shows a substantial alteration of the absolute pelvic flexion angle, more pronounced for model M2 (mean absolute difference from the base model of 19.4 degrees for M1 and 30.4 degrees for M2). Model M1 therefore appears to approximate the actual movement more closely. However, when the machine opening angle is considered, M2 reproduces the real machine motion more faithfully, with initial and final angles close to the zero angle expected in the closed position. These differences in the initial position of the two models are also visible in figure 7.

4. Discussion and conclusions

In this study, we have presented the results of using OpenCap and OpenSim to analyse motion performed on two sports machines in a setting outside the traditional biomechanics laboratory. Until now, most markerless, video-based motion capture studies have focused solely on modelling the human subject, without integrating additional elements (Uhlrich et al., 2022; Van Hooren et al., 2023). In contrast, our work includes the modelling of two exercise machines and records data outside the controlled laboratory environment.

It is important to note that our objective was not to validate the image processing used to obtain joint angles, as this has been analysed by other researchers, who reported errors of 2 to 10 degrees in joint angles (Uhlrich et al., 2022). Instead, we focused on exploring potential applications of these tools in recreational contexts, specifically gym-based sports techniques.

Our findings indicate that the clothing worn does not significantly affect the accuracy of markerless motion capture, in line with previous research that reached the same conclusion (Keller et al., 2022). Furthermore, we observed that the key challenge when incorporating machine elements into the analysis lies in accurately modelling the interaction between the subject and the machine. Further studies are needed to make progress in this regard.

Despite these limitations, our work shows that this technique can be applied beyond controlled environments. Although much remains to be explored, the combined use of CAD software with OpenCap and OpenSim has the potential to speed up data collection and simplify field measurements, opening promising prospects for future research in sports and recreation.

5. Acknowledgments

The authors thank Eric Veciana Pérez and Eric Fernández Cintas, students whose contributions to obtaining the motion capture data were crucial to this research.

References

Delp, S. L., Anderson, F. C., Arnold, A. S., Loan, P., Habib, A., John, C. T., Guendelman, E., & Thelen, D. G. (2007). OpenSim: Open-Source Software to Create and Analyze Dynamic Simulations of Movement. IEEE Transactions on Biomedical Engineering, 54(11), 1940–1950. https://doi.org/10.1109/tbme.2007.901024

Keller, V. T., Outerleys, J., Kanko, R., Laende, E. K., & Deluzio, K. J. (2022). Clothing condition does not affect meaningful clinical interpretation in markerless motion capture. Journal of Biomechanics, 141, 111182. https://doi.org/10.1016/j.jbiomech.2022.111182

Rajagopal, A., Dembia, C. L., DeMers, M. S., Delp, D. D., Hicks, J. L., & Delp, S. L. (2016). Full-Body Musculoskeletal Model for Muscle-Driven Simulation of Human Gait. IEEE Transactions on Biomedical Engineering, 63(10), 2068–2079. https://doi.org/10.1109/tbme.2016.2586891

Uhlrich, S. D., Falisse, A., Kidziński, Ł., Muccini, J., Ko, M., Chaudhari, A. S., Hicks, J. L., & Delp, S. L. (2022). OpenCap: 3D human movement dynamics from smartphone videos. bioRxiv. https://doi.org/10.1101/2022.07.07.499061

Van Hooren, B., Pecasse, N., Meijer, K., & Essers, J. M. N. (2023). The accuracy of markerless motion capture combined with computer vision techniques for measuring running kinematics. Scandinavian Journal of Medicine & Science in Sports. https://doi.org/10.1111/sms.14319